I just read an annoying article in Boston Review: The Inflated Promise of Science Education. It was written by Catarina Dutilh Novaes, a Professor of Philosophy, and Silvia Ivani, a teaching fellow in philosophy. Their main point was that the old-fashioned way of teaching science has failed because the general public mistrusts scientists. This mistrust stems, in part, from "legacies of scientific or medical racism and the commercialization of biomedical science."

The way to fix this, according to the authors, is for scientists to address these "perceived moral failures" by engaging more with society.

"... science should be done with and for society; research and innovation should be the product of the joint efforts of scientists and citizens and should serve societal interests. To advance this goal, Horizon 2020 encouraged the adoption of dialogical engagement practices: those that establish two-way communication between experts and citizens at various stages of the scientific process (including in the design of scientific projects and planning of research priorities)."

This is nonsense. It has nothing to do with science education; instead, the authors are focusing on policy decisions such as convincing people to get vaccinated.

"Clearly, scientific education ought to mean the implanting of a rational, sceptical, experimental habit of mind. It ought to mean acquiring a method – a method that can be used on any problem that one meets – and not simply piling up a lot of facts."

George Orwell

The good news is that the Boston Review article links to a report from Stanford University that's much more intelligent: Science Education in an Age of Misinformation. The philosophers think that this report advocates "... a well-meaning but arguably limited approach to the problem along the lines of the deficit model ...." In their usage, the "deficit model" refers to "a mode of science communication where scientists just dispense knowledge to the general public who are supposed to accept it uncritically."

I don't know of very many science educators who think this is the right way to teach. I think the prevailing model is to teach the nature of science (NOS) [The Nature of Science (NOS)]. That requires educating students and the general public about the way science goes about creating knowledge and why evidence-based knowledge is reliable. It's connected to teaching critical thinking, not to teaching a bunch of scientific facts. The "deficit model" is not the current standard in science education, and it hasn't been seriously defended for decades.

"Appreciating the scientific process can be even more important than knowing scientific facts. People often encounter claims that something is scientifically known. If they understand how science generates and assesses evidence bearing on these claims, they possess analytical methods and critical thinking skills that are relevant to a wide variety of facts and concepts and can be used in a wide variety of contexts."

National Science Foundation, Science and Engineering Indicators, 2008

An important part of the modern approach, as described in the Stanford report, is teaching students (and the general public) how to gather information and determine whether or not it's reliable. That means you have to learn how to evaluate the reliability of your sources and whether you can trust those who claim to be experts. I taught an undergraduate course on this topic for many years, and I learned that it's not easy to teach the nature of science and critical thinking.

The Stanford Report is about the nature of science (NOS) model and how to implement it in the age of social media. Specifically, it's about teaching ways to evaluate your sources when you are inundated with misinformation.

The main part of this approach is going to seem controversial to many because it emphasizes the importance of experts, and there's a growing reluctance in our society to trust experts. That's what the Boston Review article was all about. The solutions advocated by the authors of that article are very different from the ones presented in the Stanford report.

The authors of the Stanford report recognize that there's a widespread belief that well-educated people can make wise decisions based entirely on their own knowledge and judgement; in other words, that they can be "intellectually independent." They reject that false belief.

"The ideal envisioned by the great American educator and philosopher John Dewey—that it is possible to educate students to be fully intellectually independent—is simply a delusion. We are always dependent on the knowledge of others. Moreover, the idea that education can educate independent critical thinkers ignores the fact that to think critically in any domain you need some expertise in that domain. How then, is education to prepare students for a context where they are faced with knowledge claims based on ideas, evidence, and arguments they do not understand?"

The goal of science education is to teach students how to figure out which sources of information are supported by the real experts, and that's not an easy task. It seems pretty obvious that scientists are the experts, but not all scientists are experts, so how do you tell the difference between science quacks and expert scientists?

The answer requires some knowledge about how science works and how scientists behave. The Stanford report says that this means acquiring an understanding of "science as a social practice." I think "social practice" is a bad choice of terms, and I would have preferred that they stick with "nature of science," but that was their choice.

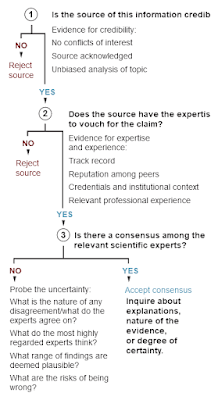

The mechanisms for recognizing the real experts rely on critical thinking, but it's not easy. Two of the lead authors¹ of the Stanford report published a short synopsis in Science last month (October 2022) [https://doi.org/10.1126/science.abq8093]. Their heuristic is shown in the flowchart above.

The idea here is that you can judge the quality of scientific information by questioning the credentials of the authors. This is how "outsiders" can make judgements about the quality of the information without being experts themselves. The rules are pretty good, but I wish there had been a bit more on "Unbiased scientific information" as a criterion. I think you can make a judgement based on whether the "experts" take the time to discuss alternative hypotheses and explain the positions of those who disagree with them. But this only applies to genuine scientific controversies, and if you don't know that there's a controversy, you have no reason to apply this filter.

For example, if a paper tells you about the wonderful world of regulatory RNAs and points out that there are 100,000 newly discovered genes for these RNAs, you would have no reason to question that if the scientists have no conflict of interest and come from prestigious universities. You would have to rely on the reviewers of the paper, and the journal, to insist that alternative explanations (e.g., junk RNA) were mentioned. That process doesn't always work.

There's no easy way to fix that problem. Scientists are biased all the time, but outsiders (i.e., non-experts) have no way of recognizing bias. I used to think that we could rely on science journalists to alert us to these biases and point out that the topic is controversial and that no consensus has been reached. That didn't work either.

At least the heuristic works some of the time, so we should make sure we teach it to students of all ages. It would be even nicer if we could teach scientists how to be credible and how to recognize their own biases.

1. The third author is my former supervisor, Bruce Alberts, who has been interested in science education for more than fifty years. He did a pretty good job of educating me! :-)

8 comments:

I think part of the problem is disagreement over what counts as credible. There are people who feel Alex Jones is a credible source of information on scientific topics, and that isn't solved by such a flowchart. The flowchart's method is good, but only if there is already trust in scientists and scientific institutions.

I don't think things will change easily if science and scientists are seen as something "other." I think we need to build trust in scientific institutions but also need to make changes to society itself. For example, a bigger emphasis on the importance of evidence needs to be made throughout society and we should show that science is just formalising the same practices that everyone uses in their daily life.

I wrote a much longer essay on some of what I think we need to do to combat science denialism: https://evidenceandreason.wordpress.com/2022/09/19/structuring-society-to-counteract-science-denialism/

The public is not mistrustful of science etc. because of medical racism etc. etc. That's an absurdity and costs these people credibility from the start. When was science received better? I see no problem. I see no disinformation. I do see incompetence and plain error.

For example, origin subjects. We creationists see error and incompetence there, so that means the methodology fails. Drawing conclusions always means working on presumptions, with the possibility that they are wrong or that there are others.

Speaking of countering misinformation, I see the product page for your book is on Amazon, unfortunately not available for preorder yet:

https://www.amazon.com/gp/product/148750859X

(Having followed Doug's link.) Typo in title: 99%

I was able to pre-order it at amazon.de. I am looking forward to receiving it by May 31, 2023.

Strangely, the title displayed on the amazon.de pages says 99% of our genome is junk while the book cover says 90%.

The wrong number is also displayed at

https://www.amazon.co.uk/s?k=Wahat%27s+in+your+genome&crid=1G0O1AP7B3VJ&sprefix=wahat+s+in+your+genome%2Caps%2C93&ref=nb_sb_noss

I contacted the publisher and the subtitle has been corrected.

https://www.amazon.com/gp/product/148750859X

Will Larry’s upcoming book be offered in epub or Kindle formats?
